
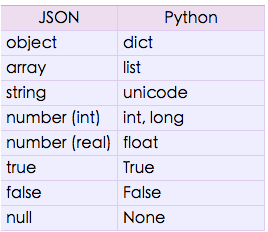

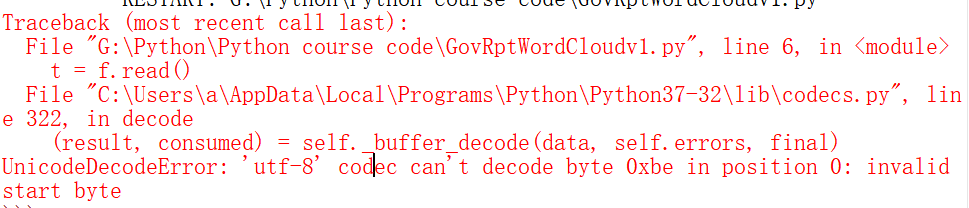

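In 2.x, a string that actually contains these backslash-escape sequences can be decoded using the string_escape codec, for example with `print 'Capit\\xc3\\xa1n\n'.decode('string_escape')` (Python 2.x). The result is a str encoded in UTF-8, in which the accented character is represented by the two bytes that were written as \\xc3\\xa1 in the original string. To get a unicode result, decode again with UTF-8.

In 3.x, the string_escape codec is replaced with unicode_escape, and it is strictly enforced that we can only encode from a str to bytes, and decode from bytes to str. unicode_escape must start from bytes in order to process the escape sequences, and it treats the resulting \xc3 and \xa1 as two separate characters rather than as the two bytes of one UTF-8 sequence, so a further step (encoding to Latin-1, then decoding as UTF-8) is needed to recover á.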
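As a rough Python 3 sketch of the decoding chain described here (the variable names `raw`, `step1` and so on are illustrative, not from the original post), each step can be written out separately:

```python
# The text as it would look if typed literally: a backslash, then x, c, 3, ...
raw = 'Capit\\xc3\\xa1n\n'

# unicode_escape only decodes from bytes, so go str -> bytes first.
step1 = raw.encode('ascii')

# Process the escapes; \xc3 and \xa1 become two separate characters.
step2 = step1.decode('unicode_escape')

# Latin-1 maps code points 0-255 straight back to single bytes.
step3 = step2.encode('latin-1')

# Those bytes are the UTF-8 encoding of the intended text.
result = step3.decode('utf-8')

print(repr(result))
```

The detour through Latin-1 is there only because it converts each of the two stray characters back into the byte with the same value.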

I'm having some brain failure in understanding reading and writing text to a file (Python 2.4). The string in question, `ss`, has an a-acute in it. So I type Capit\xc3\xa1n into my favorite editor, in file f2. What am I not understanding here? Clearly there is some vital bit of magic (or good sense) that I'm missing. What does one type into text files to get proper conversions?

What I'm truly failing to grok here is what the point of the UTF-8 representation is, if you can't actually get Python to recognize it when it comes from outside. Maybe I should just JSON dump the string and use that instead, since that has an asciiable representation, as in `print simplejson.dumps(ss)` or, writing to a file, `print >> file('f3','w'), simplejson.dumps(ss)`. More to the point, is there an ASCII representation of this Unicode object that Python will recognize and decode when coming in from a file? If so, how do I get it?

In the notation u'Capit\xe1n\n' (should be just 'Capit\xe1n\n' in 3.x, and must be in 3.0 through 3.2), the \xe1 represents just one character. \x is an escape sequence, indicating that e1 is in hexadecimal. Writing Capit\xc3\xa1n into the file in a text editor means that it actually contains the literal text \xc3\xa1. Those are 8 bytes and the code reads them all. We can see this in Python 3.x by reading the file as bytes rather than text and displaying the result. Instead, just input characters like á in the editor, which should then handle the conversion to UTF-8 and save it.
